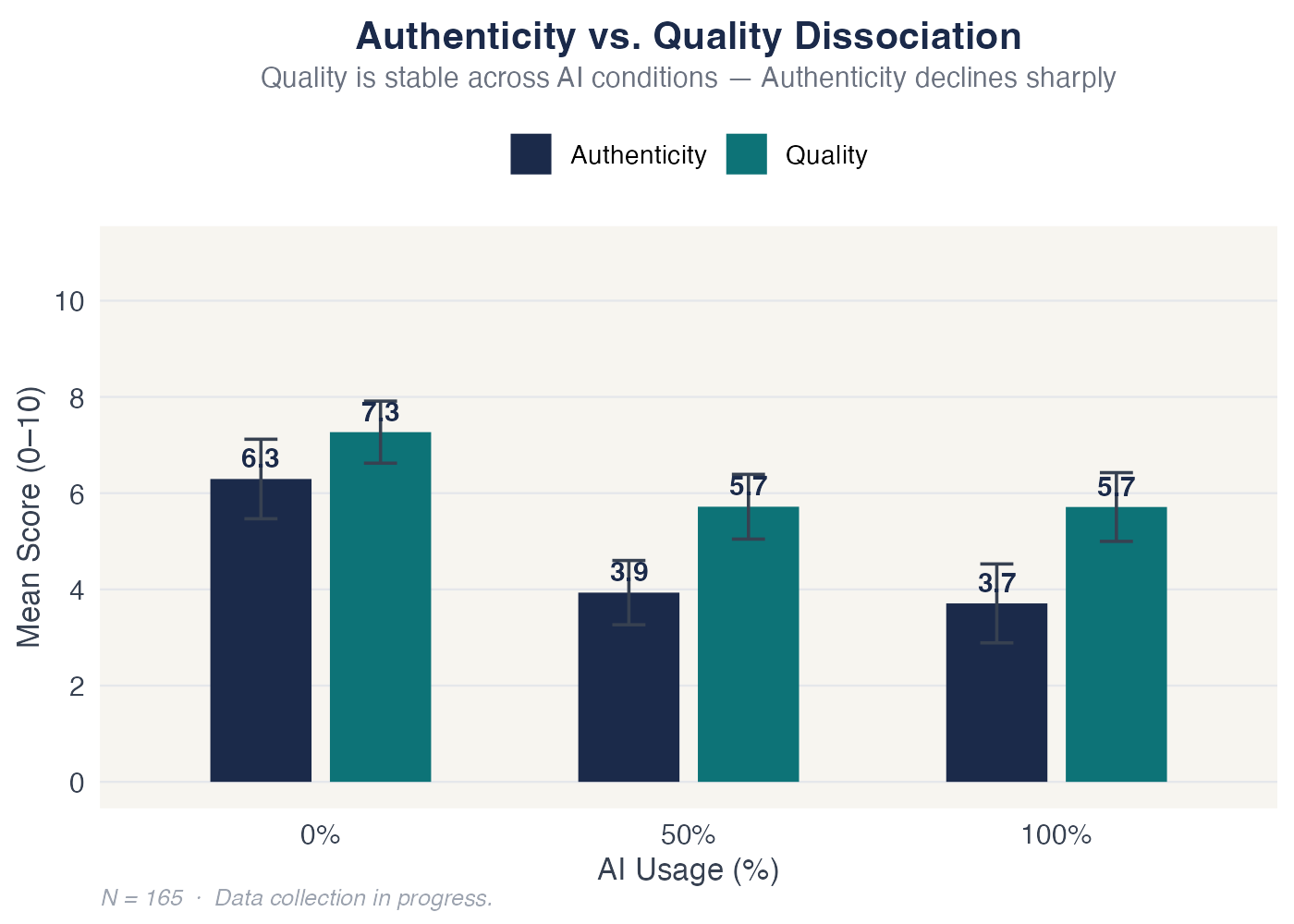

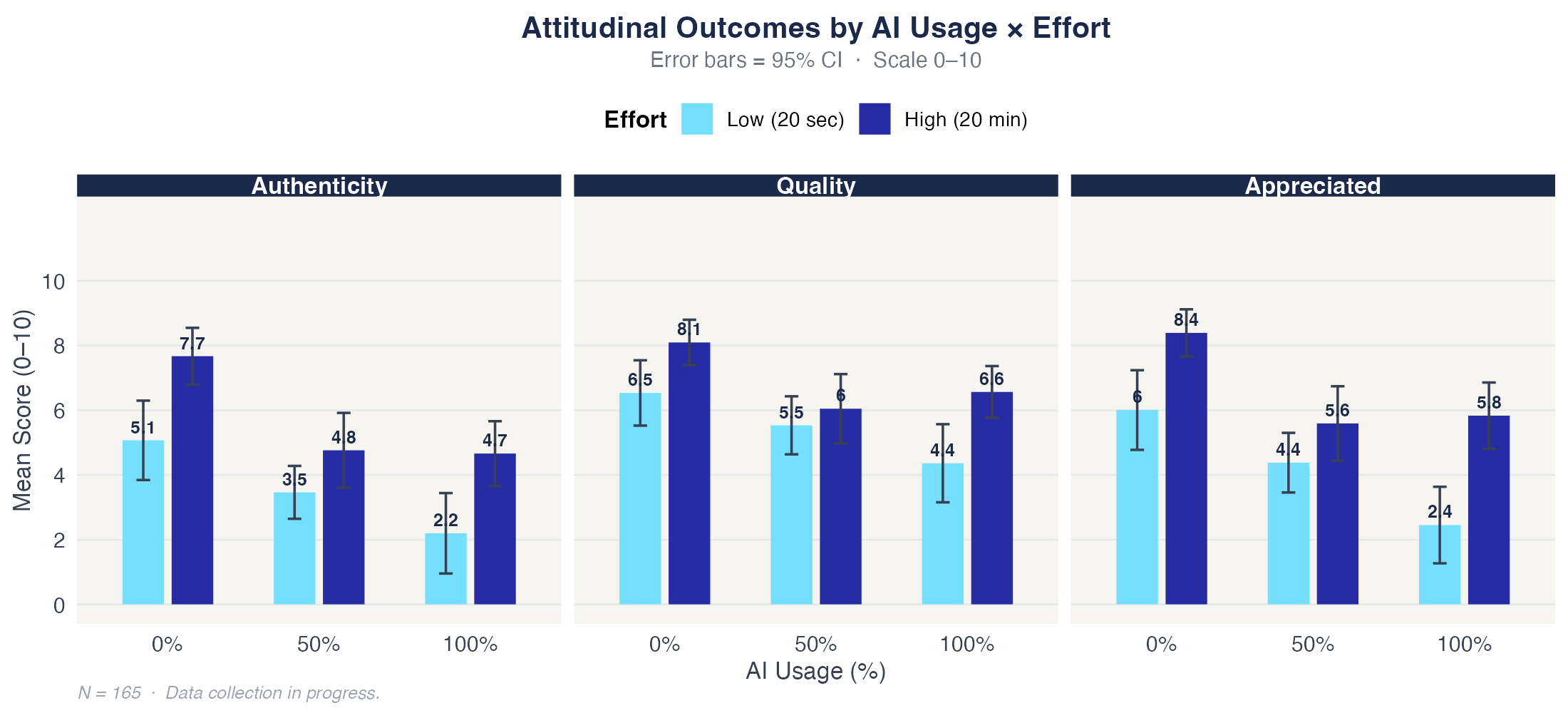

Any AI use contaminates authenticity.

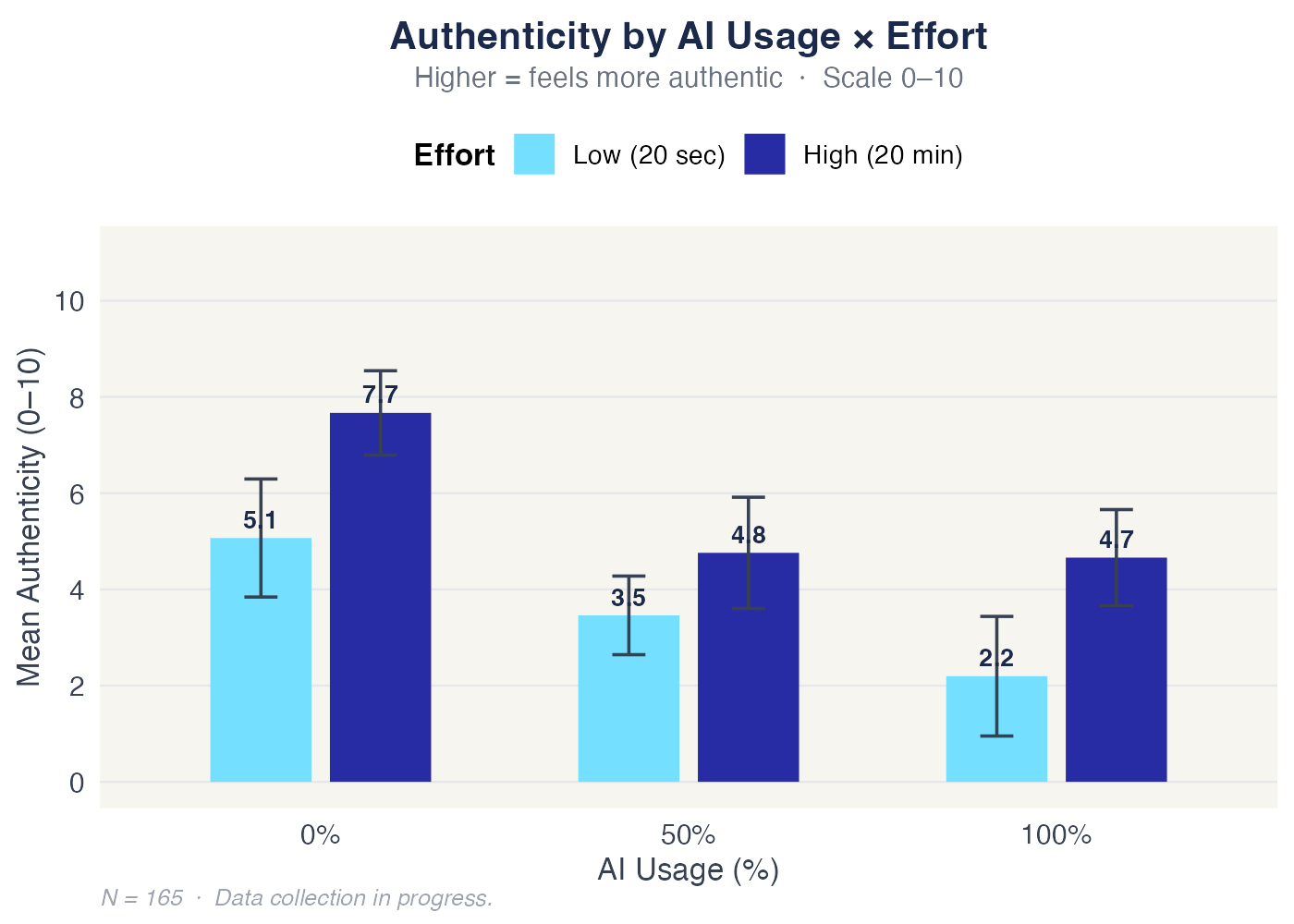

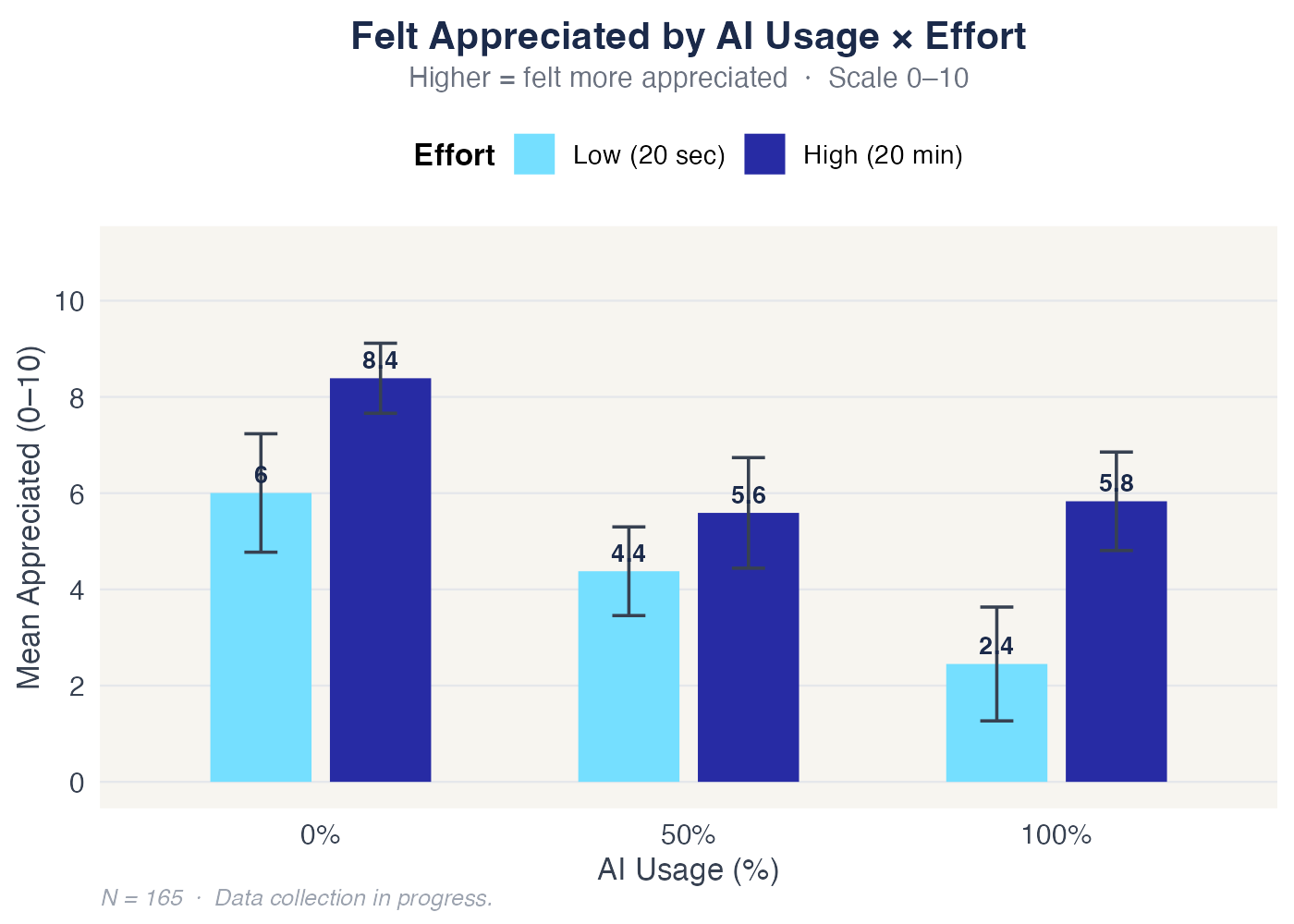

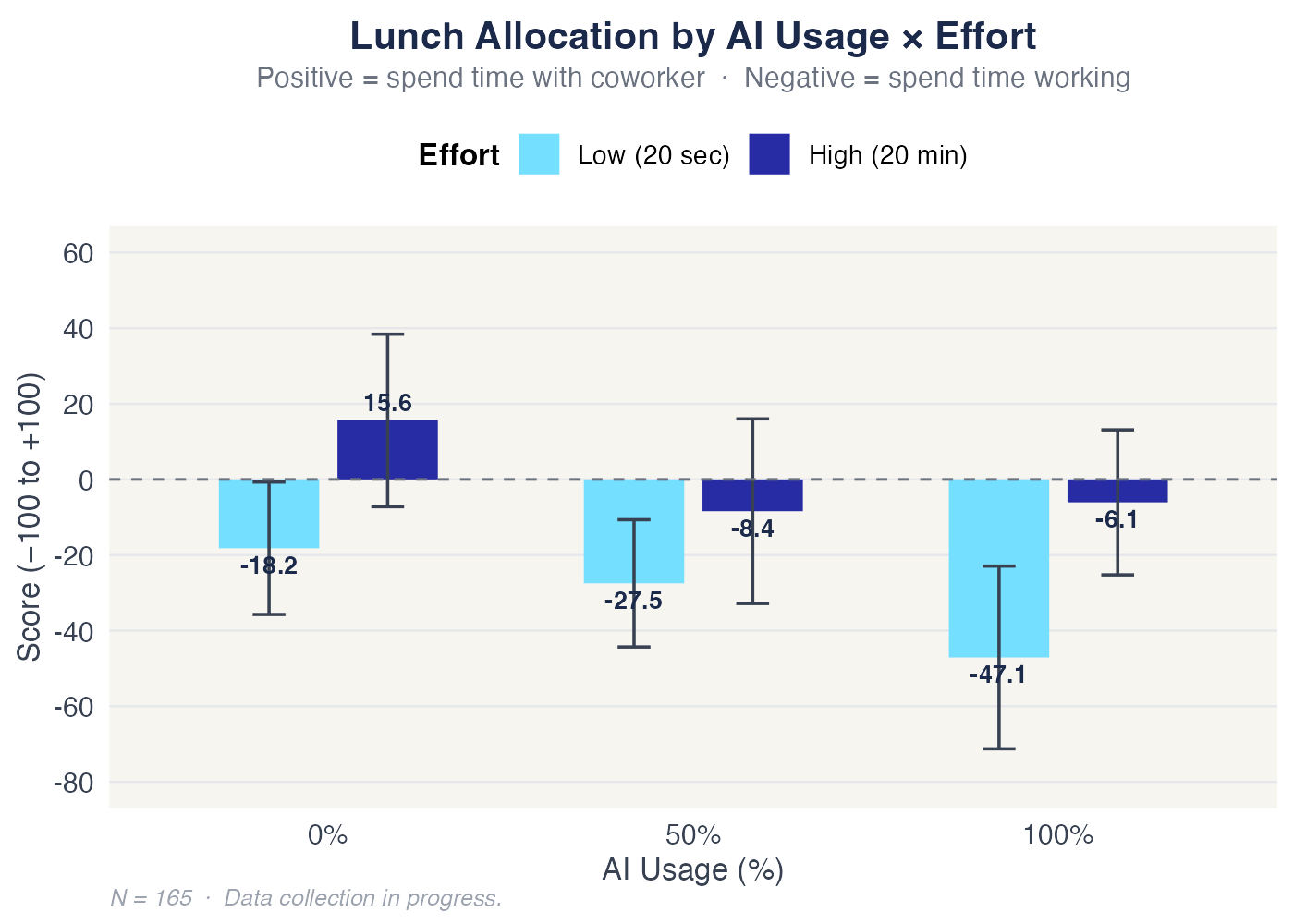

Going from 0% to just 50% AI causes a larger drop in perceived authenticity than almost any other factor, regardless of how much effort was disclosed. The floor drops immediately.

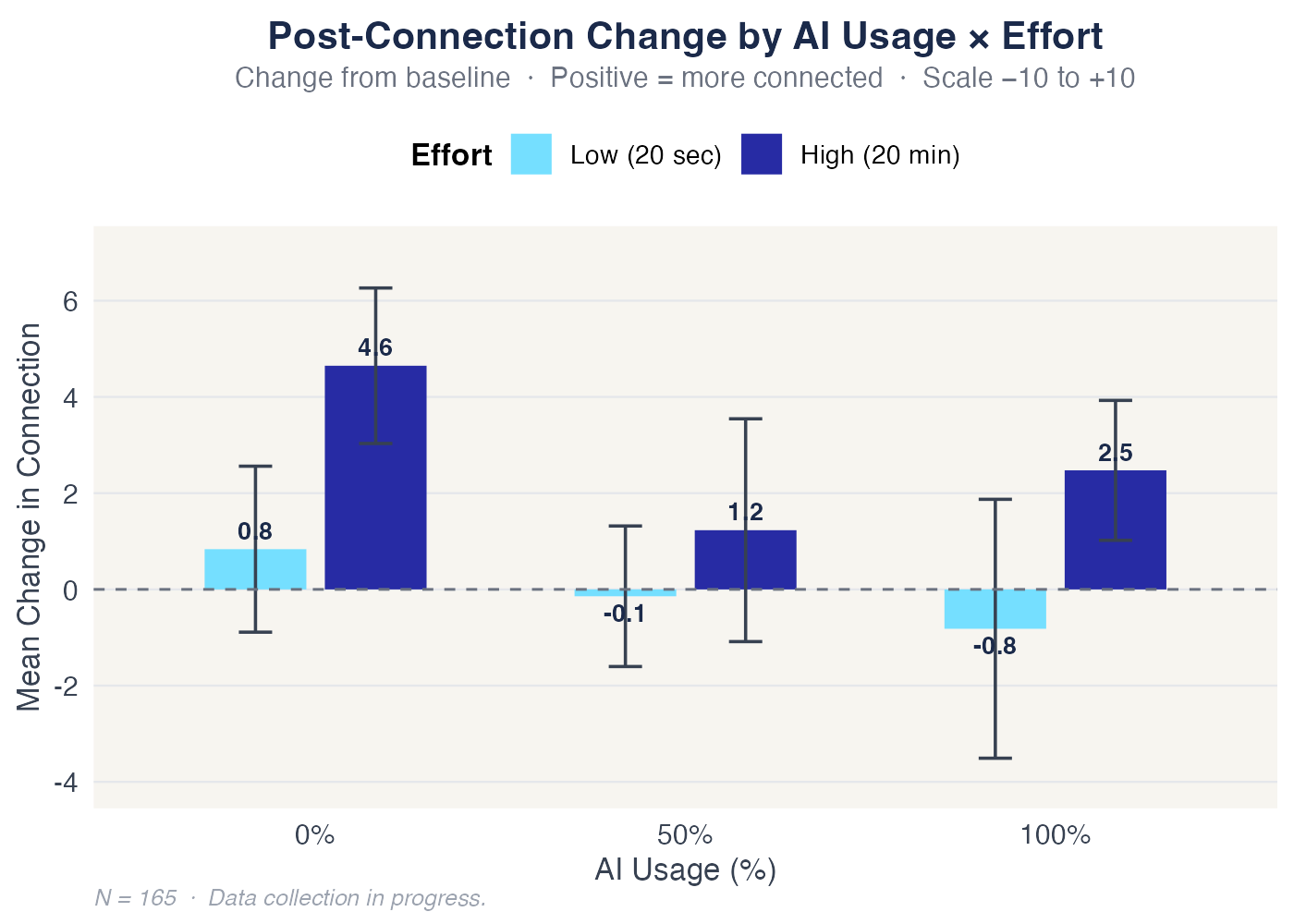

Even 20 minutes of disclosed effort can't bring a 100% AI note back to where a careless human starts. The contamination is immediate.